The website is not being found at all, often because the site does not exist or your internet connection is down.

Reason: The SEO Spider obeys robots.txt disallow directives by default.

Things to try: Set the SEO Spider to ignore robots.txt (Configuration > Robots.txt > Settings > Ignore Robots.txt), or use the custom robots.txt configuration to allow crawling.

Things to check: What is being disallowed in the site's robots.txt? (Append /robots.txt to the subdomain of the URL crawled.)
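To see which paths a robots.txt file disallows for a given crawler, the rules can be tested offline. The following is a minimal sketch using Python's standard `urllib.robotparser`. The sample rules, the `ExampleSpider` user agent and the example.com URLs are all made up for illustration, and Python's parser may not interpret every rule exactly as the SEO Spider does.

```python
from urllib.robotparser import RobotFileParser


def is_blocked(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if the robots.txt rules disallow `url` for `user_agent`."""
    parser = RobotFileParser()
    # parse() accepts the file content as an iterable of lines,
    # so no network request is made here.
    parser.parse(robots_txt.splitlines())
    return not parser.can_fetch(user_agent, url)


# Hypothetical robots.txt content, for illustration only.
SAMPLE_ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
"""

# "ExampleSpider" is a placeholder user agent, not a real crawler token.
print(is_blocked(SAMPLE_ROBOTS_TXT, "ExampleSpider", "https://example.com/private/page"))
print(is_blocked(SAMPLE_ROBOTS_TXT, "ExampleSpider", "https://example.com/blog/"))
```

With these sample rules, the first URL is reported as blocked and the second as allowed. To test a real site, paste the content of its /robots.txt file in place of `SAMPLE_ROBOTS_TXT`.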

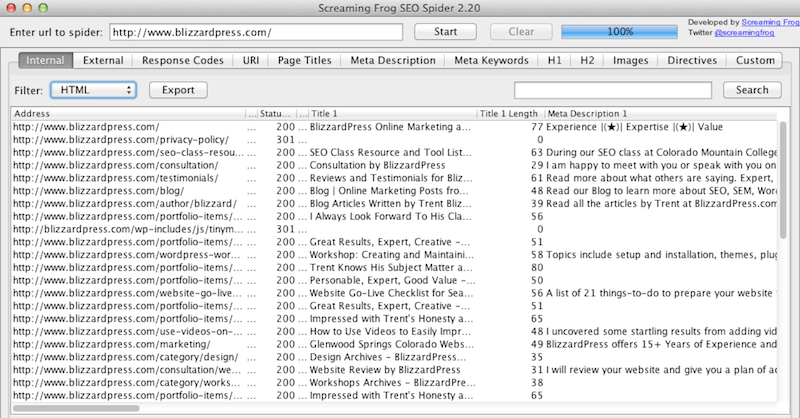

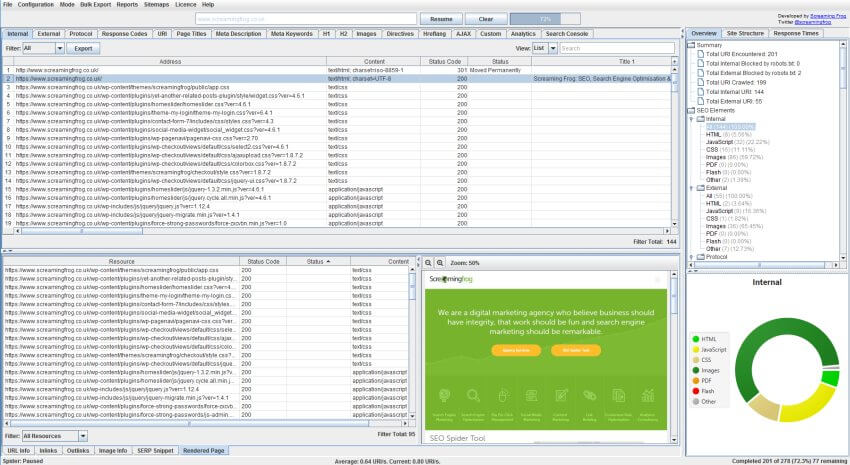

In this case, the robots.txt of the site is blocking the SEO Spider's user agent from accessing the requested URL, so the actual HTTP response is not seen due to the disallow directive.

Any '0' status code in the SEO Spider indicates the lack of an HTTP response from the server. The status provides a clue to exactly why no status code was returned.

The most common status codes you are likely to encounter when a site cannot be crawled include:

- 301 – Moved Permanently / 302 – Moved Temporarily
- 400 – Bad Request / 403 – Forbidden / 406 – Status Not Acceptable
- 500 – Internal Server Error / 502 – Bad Gateway / 503 – Service Unavailable

HTTP Status Codes Crawling With The Screaming Frog SEO Spider

If the Screaming Frog SEO Spider only crawls one page, or does not crawl as expected, the 'Status' and 'Status Code' are the first things to check to help identify the issue.

When a URL is entered into the SEO Spider and a crawl is initiated, the numerical status of the URL from the response header is shown in the 'Status Code' column, while the text equivalent is shown in the 'Status' column within the default 'Internal' tab view.

A status is part of the Hypertext Transfer Protocol (HTTP) and is found in the server response header. It is made up of a numerical status code and an equivalent text status.
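The pairing of a numerical status code with its text equivalent can be illustrated with Python's standard `http.HTTPStatus` enum. This is only a sketch: `describe_status` is a hypothetical helper, the "No HTTP response" label for 0 is a placeholder of ours, and the standard reason phrases Python returns may differ from the wording shown in the SEO Spider's 'Status' column.

```python
from http import HTTPStatus


def describe_status(code: int) -> str:
    """Map a numerical status code to a text status (hypothetical helper)."""
    if code == 0:
        # 0 is not a real HTTP status code; it stands for "no response received".
        return "No HTTP response"
    try:
        # HTTPStatus holds the standard reason phrase for each registered code.
        return HTTPStatus(code).phrase
    except ValueError:
        # Raised when the code is not registered in the HTTPStatus enum.
        return "Unknown status code"


for code in (0, 301, 403, 503):
    print(code, describe_status(code))
```

A script like this can help when reading exported crawl data, where a 0 needs to be treated differently from a genuine server response.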
